Software vulnerabilities are being discovered and exploited faster than ever before. In 2024, threat actors began weaponizing AI to scan codebases and find exploitable flaws in hours — work that once took days or weeks. Meanwhile, the average time to exploit a newly disclosed vulnerability dropped to just five days (Cybersecurity Ventures, 2024). For security teams already stretched thin, this asymmetry is dangerous.

Traditional static analysis tools have long been a cornerstone of application security (AppSec), but they carry well-known limitations: high false positive rates, pattern-matching blind spots, and an inability to reason about complex logic flows. As codebases grow and development cycles accelerate, these gaps are widening.

AI-powered vulnerability scanning is emerging as a practical response. Claude Code Security, developed by Anthropic, represents a new class of tool that uses large language models to reason about code behavior rather than simply match signatures. In this post, you'll learn how this approach works, where it fits into existing security workflows, and what security and development teams need to understand before adopting AI-assisted code analysis.

Why Traditional Static Analysis Is No Longer Enough

Static analysis tools have served security teams well for decades. But the threat landscape has shifted dramatically, and legacy approaches are showing their age.

The False Positive Problem

Security teams using traditional Static Application Security Testing (SAST) tools routinely report false positive rates exceeding 50% (NIST, 2024). Every false alert costs time — time that analysts spend triaging noise instead of remediating real risks. In high-velocity development environments, this overhead becomes unsustainable.

Pattern-based scanners excel at catching known vulnerability signatures, such as SQL injection patterns or hardcoded credentials. They struggle significantly with:

- Logic flaws that span multiple files or functions

- Context-dependent vulnerabilities that only emerge under specific conditions

- Insecure design patterns that don't match any predefined rule

- Chained vulnerabilities that individually appear benign
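To make the first bullet concrete, here is a hypothetical Python example of an insecure direct object reference (IDOR). No individual line calls a dangerous API or contains a suspicious string. The flaw is a missing ownership check, and it only shows up when you consider how the two functions are used together. All names and data are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    invoice_id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store standing in for a database table.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=100, amount_cents=5_000),
    2: Invoice(invoice_id=2, owner_id=200, amount_cents=9_900),
}


def load_invoice(invoice_id: int) -> Invoice:
    """Plain data access: nothing here for a signature-based rule to match."""
    return INVOICES[invoice_id]


def get_invoice_vulnerable(current_user_id: int, invoice_id: int) -> Invoice:
    # Logic flaw: the caller is authenticated, but ownership is never checked,
    # so any user can read any invoice by guessing IDs.
    return load_invoice(invoice_id)


def get_invoice_fixed(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = load_invoice(invoice_id)
    # Authorization check: only the invoice owner may read it.
    if invoice.owner_id != current_user_id:
        raise PermissionError("not the invoice owner")
    return invoice
```

In the vulnerable version, user 100 can read user 200's invoice. In the fixed version, the same request raises `PermissionError`.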

AI-Enabled Attackers Are Raising the Stakes

Threat actors are not waiting for defenders to catch up. Adversarial use of AI now includes automated vulnerability discovery, exploit generation, and targeted reconnaissance at scale. Security teams that rely solely on reactive, signature-based defenses face an increasingly asymmetric fight.

This dynamic — AI-enabled offense outpacing AI-limited defense — is precisely what tools like Claude Code Security are designed to address.

How Claude Code Security Works: AI That Reasons About Code

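Rather than scanning for known patterns, Claude Code Security applies Claude's language models to reason about what code actually does. This distinction matters in practice. A flaw such as a missing authorization check contains no suspicious call or string for a signature to match, so it surfaces only when the analysis follows how data and control flow through the code.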

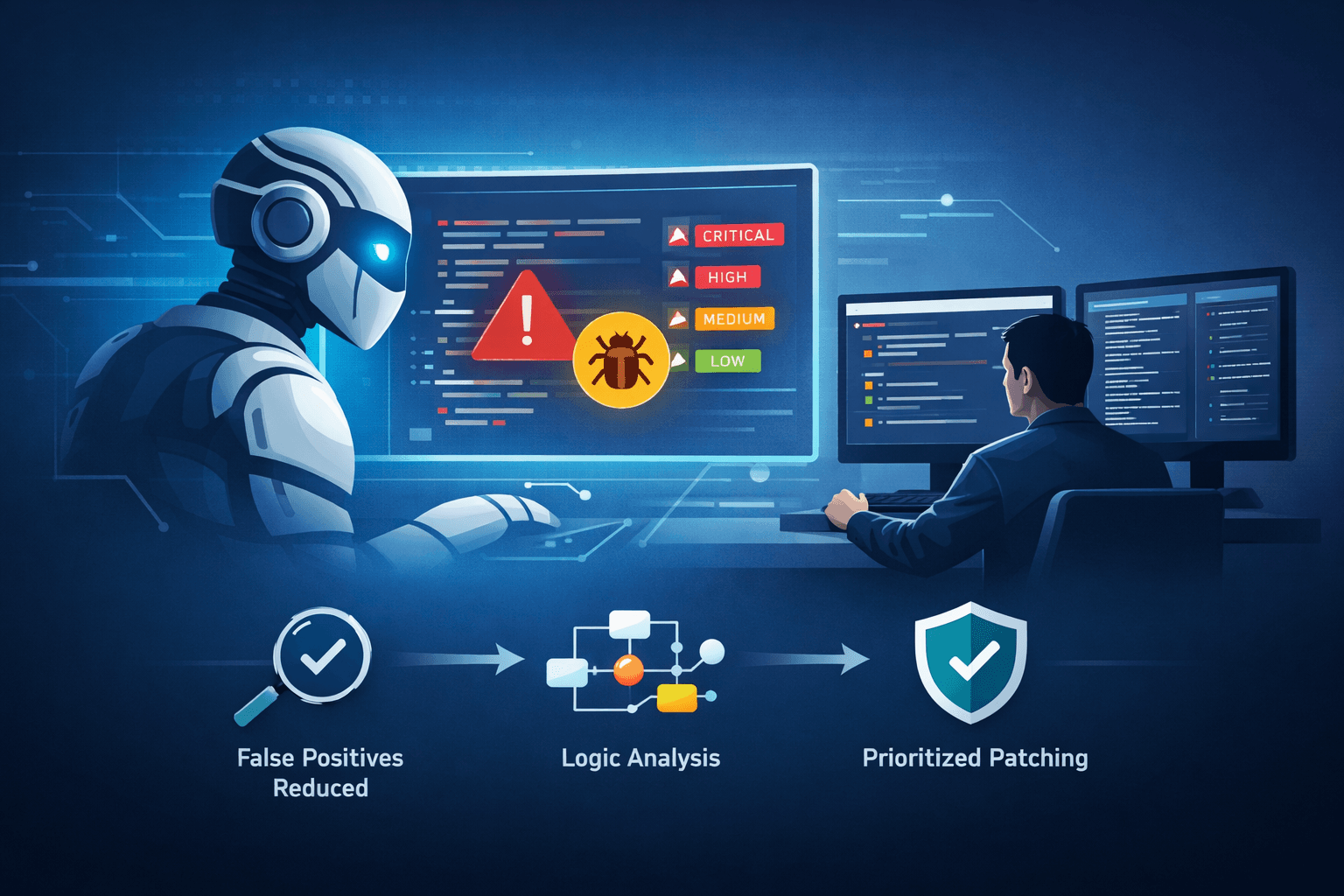

Multi-Stage Verification and Confidence Scoring

One of the core design goals is reducing false positives through multi-stage verification. When the system identifies a potential vulnerability, it applies confidence scoring before surfacing the finding. Lower-confidence results are filtered or flagged differently from high-confidence findings, allowing security teams to prioritize effectively.

This approach addresses one of the primary complaints about traditional SAST: alert fatigue. By the time a finding reaches a developer's queue, it has already passed through a reasoning layer designed to assess whether the issue is genuinely exploitable in context.
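Here is a minimal sketch of confidence-based triage. It assumes each finding carries a numeric confidence between 0 and 1. The thresholds, the three buckets, and the `Finding` fields are hypothetical. The sketch illustrates the filtering idea only and does not show how Claude Code Security computes or applies its scores.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Finding:
    rule: str
    file: str
    confidence: float  # hypothetical 0.0-1.0 score from a verification stage


def triage(
    findings: List[Finding],
    surface_at: float = 0.8,  # hypothetical threshold for the developer queue
    review_at: float = 0.5,   # hypothetical threshold for analyst review
) -> Tuple[List[Finding], List[Finding], List[Finding]]:
    """Split findings into surfaced, flagged-for-review, and suppressed buckets."""
    surfaced: List[Finding] = []
    flagged: List[Finding] = []
    suppressed: List[Finding] = []
    for f in findings:
        if f.confidence >= surface_at:
            surfaced.append(f)
        elif f.confidence >= review_at:
            flagged.append(f)
        else:
            suppressed.append(f)
    # Highest-confidence findings first, so the queue starts with the strongest cases.
    surfaced.sort(key=lambda f: f.confidence, reverse=True)
    return surfaced, flagged, suppressed
```

With findings scored 0.93, 0.62, and 0.21, the first reaches the developer queue, the second goes to an analyst for a second look, and the third is suppressed.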

Severity Prioritization Across Large Repositories

Complex, large-scale repositories present a practical challenge for manual review — there is simply too much code to assess thoroughly. Claude Code Security is designed to analyze these environments at scale, then surface findings ranked by severity.

Table: Vulnerability Severity Tiers and Response Guidance

| Severity Level | CVSS Score Range | Typical Finding Type | Recommended Response Time |
|---|---|---|---|
| Critical | 9.0 – 10.0 | RCE, auth bypass | Immediate (< 24 hours) |
| High | 7.0 – 8.9 | Privilege escalation, data exposure | < 72 hours |
| Medium | 4.0 – 6.9 | Insecure configuration, weak crypto | Scheduled sprint |
| Low | 0.1 – 3.9 | Code quality, informational | Backlog review |

Severity ratings align with established frameworks like the Common Vulnerability Scoring System (CVSS) and support compliance documentation requirements under standards such as PCI DSS, HIPAA, and SOC 2 Type II.
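The tiers in the severity table can be expressed as a small lookup. This is a minimal Python sketch that assumes your scanner exports a CVSS base score for each finding. The function name `severity_tier` is hypothetical. The "None" tier for a score of 0.0 comes from the CVSS v3.x qualitative rating scale, not from the table.

```python
from datetime import timedelta
from typing import Optional, Tuple


def severity_tier(cvss: float) -> Tuple[str, Optional[timedelta]]:
    """Map a CVSS base score to a tier and a fixed response window, if any."""
    if not 0.0 <= cvss <= 10.0:
        raise ValueError(f"CVSS score out of range: {cvss}")
    if cvss >= 9.0:
        return "Critical", timedelta(hours=24)
    if cvss >= 7.0:
        return "High", timedelta(hours=72)
    if cvss >= 4.0:
        return "Medium", None  # scheduled sprint: no fixed clock
    if cvss >= 0.1:
        return "Low", None     # backlog review
    return "None", None        # CVSS v3.x labels a 0.0 score "None"
```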

Integrating AI Vulnerability Scanning Into Your Security Workflow

Adopting a new security tool only creates value if it fits into the way your teams already work. Claude Code Security is designed with integration as a first-class concern.

CI/CD Pipeline Integration

The most effective deployment model embeds vulnerability scanning directly into Continuous Integration/Continuous Deployment (CI/CD) pipelines. When scanning runs automatically on every pull request or code commit, security checks become part of the development process rather than a gate added at the end.

Pro Tip: Configure pipeline thresholds to block merges only on critical and high-severity findings. Routing medium and low findings to a dashboard review queue prevents bottlenecks while still maintaining visibility.
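Here is a minimal sketch of that gate as a pipeline step. The JSON shape (a list of objects with `severity` and `title` keys) and the script itself are hypothetical. Claude Code Security's actual output format may differ, so adapt the field names to whatever your scanner emits.

```python
import json
import sys
from typing import Dict, List

# Severities that fail the pipeline job; everything else is routed for review.
BLOCKING = {"critical", "high"}


def gate(findings: List[Dict]) -> int:
    """Return a process exit code: 1 blocks the merge, 0 lets it through."""
    blocking = [f for f in findings if f["severity"].lower() in BLOCKING]
    for f in findings:
        if f["severity"].lower() not in BLOCKING:
            # Medium and low findings go to the dashboard review queue instead.
            print(f"queued for review: {f['severity']} {f['title']}")
    for f in blocking:
        print(f"BLOCKING: {f['severity']} {f['title']}", file=sys.stderr)
    return 1 if blocking else 0


if __name__ == "__main__" and len(sys.argv) == 2:
    # Hypothetical input: a JSON array of findings written by an earlier scan step.
    with open(sys.argv[1], encoding="utf-8") as fh:
        sys.exit(gate(json.load(fh)))
```

A pipeline would run this as `python gate.py findings.json` after the scan step. A non-zero exit code fails the job. If the repository requires that job to pass before merging, the exit code also blocks the merge.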

Code Review and Human-in-the-Loop Approval

Anthropic's design philosophy for this tool emphasizes a human-in-the-loop model. When the system generates patch suggestions for identified vulnerabilities, those suggestions require review and approval from a developer or security engineer before they are applied.

This is not a limitation — it is a sound security principle. Automated patch application without human review introduces its own risks, including unintended behavior changes and dependency conflicts.
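The sketch below shows one way a team might enforce the same rule in its own tooling. It is not Anthropic's implementation. The `PatchSuggestion` class and its methods are hypothetical, and actually applying the diff is delegated to a caller-supplied function.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class PatchSuggestion:
    """An AI-generated fix that stays inert until a human signs off."""
    finding_id: str
    diff: str
    approved_by: Optional[str] = None

    def approve(self, reviewer: str) -> None:
        # Record who reviewed the change; this doubles as an audit trail.
        if not reviewer.strip():
            raise ValueError("approval requires a named reviewer")
        self.approved_by = reviewer

    def apply(self, apply_diff: Callable[[str], None]) -> None:
        # The approval check is the gate: unreviewed patches never touch the code.
        if self.approved_by is None:
            raise PermissionError(f"patch for {self.finding_id} has not been approved")
        apply_diff(self.diff)
```

Routing every write through `apply()` keeps the approval check in one place. This makes it harder for an automation script to bypass review by accident.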

Table: AI-Assisted vs. Traditional Vulnerability Scanning Comparison

| Capability | Pattern-Based SAST | AI-Powered Scanning |
|---|---|---|
| Logic flaw detection | Limited | Strong |
| False positive rate | High (often 50%+) | Reduced via confidence scoring |
| Patch suggestion | Rarely available | Generated for many findings |
| Large codebase analysis | Slow, incomplete | Designed for scale |
| Workflow integration | Mature ecosystem | CI/CD and review integration |
| Human approval required | Varies | Enforced by design |

Using the Centralized Dashboard for Team-Wide Visibility

Security findings scattered across individual developer inboxes are findings that fall through the cracks. A centralized dashboard changes the operational model for AppSec teams.

Aggregating Findings Across Services and Codebases

In microservices architectures or organizations with multiple active repositories, visibility is a genuine challenge. Claude Code Security's dashboard is designed to aggregate findings across services, giving platform and security teams a unified view of risk.

This supports several compliance and governance requirements. ISO 27001 and NIST SP 800-53 both expect organizations to demonstrate ongoing monitoring and documented risk management processes. A centralized findings dashboard provides an audit trail that can serve as evidence for those controls.
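A cross-service roll-up can be sketched as a simple count table. The sketch assumes each finding is tagged with the service or repository it came from. The function and data shape are hypothetical, and a real dashboard would also track owners, links, and status.

```python
from collections import Counter, defaultdict
from typing import DefaultDict, Dict, Iterable, Tuple

SEVERITY_ORDER = ("Critical", "High", "Medium", "Low")


def aggregate(findings: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, int]]:
    """Roll (service, severity) pairs up into a per-service count table."""
    counts: DefaultDict[str, Counter] = defaultdict(Counter)
    for service, severity in findings:
        counts[service][severity] += 1
    # Emit every severity column, including zeros, so each service row has the
    # same shape; services are sorted by name for a stable display order.
    return {
        svc: {sev: c[sev] for sev in SEVERITY_ORDER}
        for svc, c in sorted(counts.items())
    }
```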

Tracking Remediation Progress

Visibility into open vulnerabilities is only useful if teams can also track whether those vulnerabilities are being addressed. Dashboard functionality that maps findings to remediation status enables security leads to:

- Identify stale findings that have exceeded acceptable exposure windows

- Monitor mean time to remediation (MTTR) as a security performance metric

- Flag recurring vulnerability types that indicate systemic code quality issues

- Report security posture to leadership without manual aggregation
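The first three metrics in that list reduce to simple calculations over finding timestamps. This minimal sketch assumes each finding records when it was opened and, if fixed, when it was resolved. The exposure windows reuse the Critical and High response times from the severity table. All names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Optional


@dataclass
class TrackedFinding:
    category: str                      # e.g. "sql-injection"
    severity: str                      # "Critical", "High", "Medium", "Low"
    opened: datetime
    resolved: Optional[datetime] = None


# Hypothetical exposure windows taken from the severity table's response times.
MAX_OPEN: Dict[str, timedelta] = {
    "Critical": timedelta(hours=24),
    "High": timedelta(hours=72),
}


def mttr(findings: List[TrackedFinding]) -> Optional[timedelta]:
    """Mean time to remediation over findings that have been closed."""
    durations = [f.resolved - f.opened for f in findings if f.resolved is not None]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


def stale(findings: List[TrackedFinding], now: datetime) -> List[TrackedFinding]:
    """Open findings that have outlived their severity's exposure window."""
    return [
        f for f in findings
        if f.resolved is None
        and f.severity in MAX_OPEN
        and now - f.opened > MAX_OPEN[f.severity]
    ]


def recurring(findings: List[TrackedFinding], min_count: int = 3) -> List[str]:
    """Categories that keep reappearing, a hint of a systemic coding issue."""
    counts = Counter(f.category for f in findings)
    return sorted(cat for cat, n in counts.items() if n >= min_count)
```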

Positioning AI Code Security Within a Defense-in-Depth Strategy

Important: AI-powered scanning augments your existing security stack — it does not replace it. Anthropic explicitly frames Claude Code Security as a complement to traditional static analysis tools, not a replacement.

Where AI Scanning Fits in the Security Toolchain

A mature AppSec program typically layers multiple control types. Here is where AI-powered code analysis fits within a defense-in-depth architecture:

Table: AppSec Toolchain Layers and AI Scanning Integration

| Layer | Tool Type | Role | AI Scanning Contribution |
|---|---|---|---|
| Development | IDE plugins, linters | Real-time feedback | Augments with reasoning |
| CI/CD | SAST, SCA, secrets detection | Pre-merge gates | High-confidence findings |
| Staging | DAST, penetration testing | Runtime behavior | Complements static findings |
| Production | SIEM, runtime protection | Active monitoring | Feeds risk prioritization |

Aligning With MITRE ATT&CK and CIS Controls

Organizations using MITRE ATT&CK for threat modeling can map AI-detected code vulnerabilities to specific techniques and tactics. This alignment helps prioritize remediation based on what real adversaries are actively exploiting, not just theoretical risk scores.
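Here is a minimal sketch of ATT&CK-informed prioritization. It assumes findings are tagged with a CWE ID and a CVSS score, and that your threat intelligence supplies a set of technique IDs currently seen in active exploitation. The CWE-to-technique mapping below is a short illustrative example, not an official MITRE mapping. The technique IDs themselves (T1190, T1078, T1068) are real ATT&CK entries.

```python
from typing import Dict, List, Set, Tuple

# Illustrative CWE -> ATT&CK technique mapping; a real program would maintain
# its own reviewed mapping rather than rely on this short example.
CWE_TO_ATTACK: Dict[str, str] = {
    "CWE-89": "T1190",   # SQL injection -> Exploit Public-Facing Application
    "CWE-798": "T1078",  # Hard-coded credentials -> Valid Accounts
    "CWE-269": "T1068",  # Improper privilege management -> Exploitation for Privilege Escalation
}


def prioritize(
    findings: List[Tuple[str, float]],
    active_techniques: Set[str],
) -> List[Tuple[str, float, bool]]:
    """Order (cwe, cvss) findings so actively exploited techniques come first."""
    ranked = []
    for cwe, cvss in findings:
        technique = CWE_TO_ATTACK.get(cwe)  # None when the CWE is unmapped
        ranked.append((cwe, cvss, technique in active_techniques))
    # Active exploitation outranks raw score; CVSS breaks ties within each group.
    ranked.sort(key=lambda r: (r[2], r[1]), reverse=True)
    return ranked
```

Sorting on the active-exploitation flag first means a lower-scoring finding tied to a technique adversaries are using now can outrank a higher-scoring one they are not targeting.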

CIS Controls v8 covers secure software development in Control 16 (Application Software Security). Integrating AI-powered scanning into your development workflow supports the control's safeguards for applying code-level security checks, such as static analysis, within the application life cycle.

Key Takeaways

- Integrate AI-powered scanning into CI/CD pipelines to catch vulnerabilities before code reaches production

- Use confidence scoring and severity ratings to prioritize remediation and reduce alert fatigue

- Maintain human-in-the-loop review for all AI-generated patch suggestions before applying changes

- Leverage centralized dashboards to track remediation progress and support compliance documentation

- Treat AI code scanning as a complement to SAST, DAST, and penetration testing — not a replacement

- Align findings with MITRE ATT&CK techniques to prioritize what adversaries are actively exploiting

Conclusion

The gap between how fast attackers find vulnerabilities and how fast defenders remediate them is a defining challenge in modern security. AI-powered vulnerability scanning represents a meaningful step toward closing that gap — not by replacing human judgment, but by augmenting it with reasoning capabilities that pattern-based tools cannot match.

Claude Code Security reflects a broader shift in how security teams can use AI: not as an autonomous decision-maker, but as a capable analyst that surfaces prioritized, contextualized findings and proposes solutions for human review. For organizations managing complex codebases, high-velocity development, or stringent compliance requirements, this model offers real operational value. The next step is evaluating where AI-assisted scanning fits within your existing AppSec program and identifying the workflow integrations that will deliver the most immediate risk reduction.

Frequently Asked Questions

Q: Can AI-powered code scanning replace traditional SAST tools? A: No — AI-powered scanning is designed to augment traditional static analysis, not replace it. Pattern-based SAST tools remain effective for known vulnerability signatures and have a mature integration ecosystem, while AI scanning adds reasoning capability for logic flaws and complex vulnerabilities.

Q: How does confidence scoring reduce false positives in AI vulnerability scanning? A: Confidence scoring applies a multi-stage verification process before surfacing a finding, assessing whether a detected issue is genuinely exploitable in its specific context. This filters out many of the ambiguous matches that cause high false positive rates in traditional scanners, so your team spends time on findings that matter.

Q: Is it safe to allow AI to automatically apply patch suggestions to production code? A: Automated patch application without human review introduces risk, including unintended behavior changes and dependency conflicts. The recommended approach — and the model Anthropic enforces — requires a developer or security engineer to review and approve any AI-generated patch before it is applied.

Q: How does AI code security support compliance with frameworks like SOC 2 or PCI DSS? A: Centralized dashboards provide documented evidence of continuous vulnerability monitoring and remediation tracking, which directly supports audit requirements under SOC 2 Type II, PCI DSS Requirement 6, and ISO 27001 controls. Severity ratings also help demonstrate risk-based prioritization, a key expectation in most compliance frameworks.

Q: What types of vulnerabilities are AI-powered scanners best suited to detect? A: AI scanners excel at identifying logic flaws, context-dependent vulnerabilities, and insecure design patterns that span multiple files or functions — precisely the categories that pattern-based tools struggle with. They are particularly effective in large, complex repositories where manual review is impractical and where chained vulnerabilities may not trigger traditional rule-based alerts.
